Artificial intelligence does not deliver business value in a notebook environment. Models trained on carefully curated datasets must ultimately be deployed, monitored, scaled, and maintained in real-world systems. This transition from experimentation to production is one of the most complex phases in the machine learning lifecycle. Tools like BentoML have emerged to address this challenge by standardizing how models are packaged and served, making AI deployment more reliable, portable, and secure.

TLDR: Deploying machine learning models into production is often more difficult than training them. Tools such as BentoML simplify this process by packaging models, dependencies, and APIs into portable services that can be deployed across environments. These platforms help organizations ensure reproducibility, scalability, and monitoring while reducing engineering overhead. As AI adoption grows, structured deployment solutions are becoming essential infrastructure.

Historically, machine learning engineers relied on ad hoc scripts, custom APIs, and environment-specific configurations to operationalize models. This approach often led to inconsistent performance, dependency conflicts, and significant friction between data science and DevOps teams. Modern deployment tools formalize the process by treating models as versioned, buildable artifacts—just like application code.

The Challenge of Moving from Research to Production

In controlled experiments, data scientists work within predictable environments. However, production systems introduce several complications:

- Dependency management: Library versions must match training environments.

- Scalability: APIs must handle fluctuating traffic loads.

- Latency constraints: Real-time inference requires performance optimization.

- Security: Endpoints must enforce authentication and data protection.

- Observability: Monitoring predictions and detecting drift is critical.

Without standardized tooling, teams risk deployment delays, fragile systems, and a lack of reproducibility. Deployment frameworks aim to reduce these inefficiencies by abstracting away infrastructure details.
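
To make the dependency-management point concrete, here is a minimal sketch of a startup check that compares installed package versions against the versions recorded at training time. The function name `find_version_mismatches` and the shape of the `pinned` mapping are assumptions made for illustration. They are not part of BentoML or any other tool discussed here.

```python
from importlib import metadata
from typing import Dict, Optional, Tuple


def find_version_mismatches(
    pinned: Dict[str, str],
) -> Dict[str, Tuple[str, Optional[str]]]:
    """Return {package: (expected, installed)} for every package that differs.

    `installed` is None when the package is not installed at all.
    """
    mismatches = {}
    for name, expected in pinned.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            installed = None
        if installed != expected:
            mismatches[name] = (expected, installed)
    return mismatches
```

A service could call this once at startup with the versions captured during training and refuse to serve traffic if the result is not empty.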

What Is BentoML?

BentoML is an open-source framework designed to simplify model serving and deployment. It allows data scientists to package trained models along with their dependencies and expose them as scalable API services. The goal is to provide a consistent workflow across development, staging, and production environments.

Rather than rewriting code for different serving environments, BentoML enables teams to:

- Package models into versioned “Bentos.”

- Expose inference endpoints as HTTP APIs, and as gRPC APIs in releases that support it.

- Containerize services using Docker.

- Deploy across cloud or on-premise infrastructure.

This approach bridges the traditional gap between data science experimentation and DevOps execution.

Core Capabilities of AI Model Deployment Tools

While BentoML is a leading example, it belongs to a broader ecosystem of deployment platforms. These tools generally share several important capabilities:

1. Model Packaging

Packaging involves bundling a trained model, preprocessing logic, configuration files, and dependencies into a reproducible artifact. This ensures that the production environment mirrors the training environment.

Why this matters: Inconsistent environments are one of the most frequent causes of failed deployments.
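
The packaging idea can be sketched with the standard library alone. The example below is an illustrative assumption, not how BentoML builds a Bento: it writes a pickled model and a JSON manifest into a directory named after a hash of the model bytes, so the same model always gets the same version tag. The names `package_model`, `load_packaged_model`, `model.pkl`, and `manifest.json` are hypothetical.

```python
import hashlib
import json
import pickle
from pathlib import Path
from typing import List


def package_model(model, requirements: List[str], out_dir: Path) -> Path:
    """Write the model and a manifest into a directory named by content hash."""
    payload = pickle.dumps(model)
    version = hashlib.sha256(payload).hexdigest()[:12]
    artifact_dir = out_dir / f"model-{version}"
    artifact_dir.mkdir(parents=True, exist_ok=True)
    (artifact_dir / "model.pkl").write_bytes(payload)
    manifest = {"version": version, "requirements": sorted(requirements)}
    (artifact_dir / "manifest.json").write_text(json.dumps(manifest, indent=2))
    return artifact_dir


def load_packaged_model(artifact_dir: Path):
    """Load the model and check that its bytes still match the manifest."""
    manifest = json.loads((artifact_dir / "manifest.json").read_text())
    payload = (artifact_dir / "model.pkl").read_bytes()
    if hashlib.sha256(payload).hexdigest()[:12] != manifest["version"]:
        raise ValueError("model file does not match the manifest version")
    # Only unpickle artifacts from a trusted source: pickle can execute code.
    return pickle.loads(payload), manifest
```

Deriving the version from the content, rather than assigning it by hand, means that a modified model file can be detected when the artifact is loaded.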

2. API Layer Generation

Deployment tools typically auto-generate API routes for inference. This abstracts away server configuration and routing logic. Engineers can focus on optimizing endpoints rather than building infrastructure scaffolding.
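
As a rough illustration of route generation, the sketch below registers a plain Python function as an inference endpoint through a decorator and dispatches JSON requests to it. `InferenceService`, its `api` decorator, and the `predict` function are hypothetical names chosen for this example. Real frameworks add request validation, batching, and an actual HTTP server on top of this pattern.

```python
import json
from typing import Callable, Dict, Tuple


class InferenceService:
    """Maps URL paths to handler functions and dispatches JSON requests."""

    def __init__(self) -> None:
        self.routes: Dict[str, Callable[[dict], dict]] = {}

    def api(self, func: Callable[[dict], dict]) -> Callable[[dict], dict]:
        """Register `func` under a route derived from its name."""
        self.routes[f"/{func.__name__}"] = func
        return func

    def handle(self, path: str, body: str) -> Tuple[int, str]:
        """Return (status code, JSON response) for one request."""
        handler = self.routes.get(path)
        if handler is None:
            return 404, json.dumps({"error": f"no route {path}"})
        try:
            payload = json.loads(body)
        except json.JSONDecodeError:
            return 400, json.dumps({"error": "invalid JSON"})
        return 200, json.dumps(handler(payload))


service = InferenceService()


@service.api
def predict(payload: dict) -> dict:
    # Stand-in for a real model: a fixed linear score.
    return {"score": 0.5 * payload["x"] + 1.0}
```

The engineer writes only `predict`. The path `/predict` and the JSON handling come from the framework layer.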

3. Containerization and Orchestration

Most solutions integrate with Docker and Kubernetes. Containerization makes a service portable across any host that runs a compatible container runtime, while orchestration handles automatic scaling and high availability.

4. Version Control and Reproducibility

Versioned artifacts allow teams to track changes in models and roll back if necessary. This is especially important in regulated industries where audit trails are required.
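
A minimal sketch of version tracking with rollback follows. It assumes an in-memory registry. The `ModelRegistry` class and its method names are hypothetical, and a production registry would persist both the artifacts and the log.

```python
from typing import Dict, List, Optional, Tuple


class ModelRegistry:
    """Stores model versions, tracks the live one, and logs every change."""

    def __init__(self) -> None:
        self._models: Dict[str, object] = {}
        self._live_stack: List[str] = []  # promoted versions, oldest first
        self.audit_log: List[Tuple[str, str]] = []  # (action, version)

    def register(self, version: str, model: object) -> None:
        if version in self._models:
            raise ValueError(f"version {version!r} is already registered")
        self._models[version] = model
        self.audit_log.append(("register", version))

    def promote(self, version: str) -> None:
        if version not in self._models:
            raise KeyError(f"unknown version {version!r}")
        self._live_stack.append(version)
        self.audit_log.append(("promote", version))

    def rollback(self) -> str:
        """Make the previously live version live again and return its tag."""
        if len(self._live_stack) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._live_stack.pop()
        restored = self._live_stack[-1]
        self.audit_log.append(("rollback", restored))
        return restored

    @property
    def live(self) -> Optional[str]:
        return self._live_stack[-1] if self._live_stack else None
```

Because registered versions are never overwritten and every action is appended to `audit_log`, the registry can answer both "what is live now" and "what was live before".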

5. Monitoring and Observability

Advanced platforms integrate logging, metrics tracking, and performance monitoring. These capabilities help detect:

- Data drift

- Prediction anomalies

- Latency degradation

- System failures

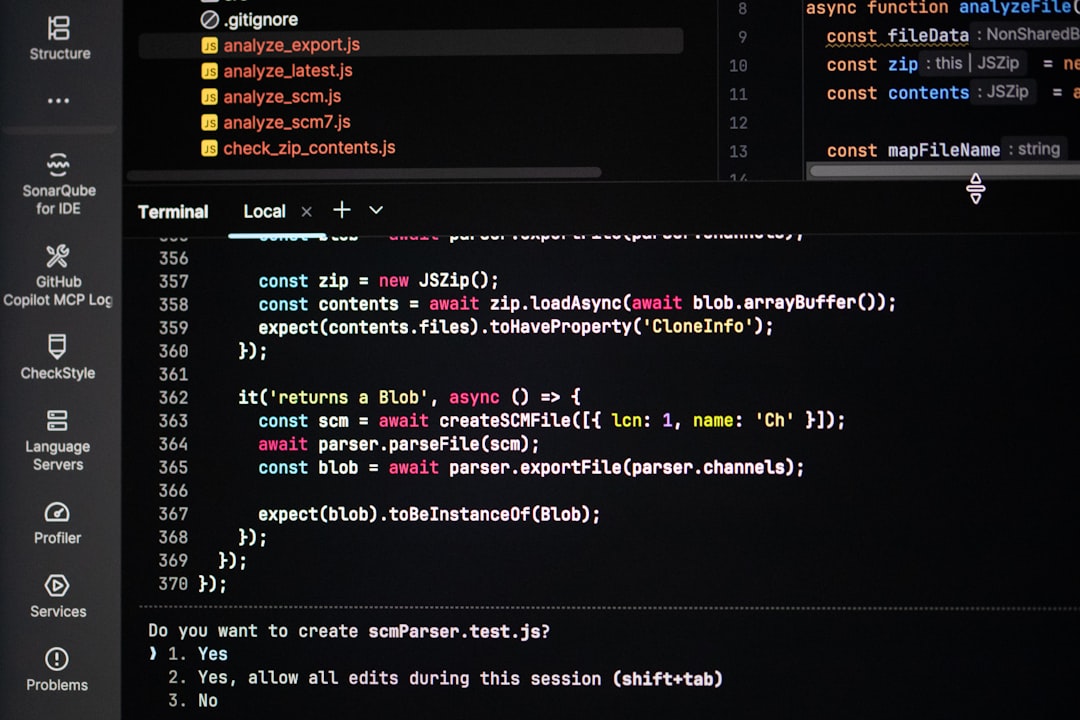

How BentoML Works in Practice

A typical BentoML deployment process includes the following steps:

1. Train the model using frameworks such as PyTorch, TensorFlow, or scikit-learn.

2. Wrap the model in a BentoML service definition.

3. Build a Bento artifact that captures dependencies and metadata.

4. Containerize the artifact into a Docker image.

5. Deploy to a Kubernetes cluster or cloud platform.

This workflow standardizes what had previously been a fragmented process.

Comparison of Leading AI Deployment Tools

Several platforms compete in this space, each with different strengths. The table below compares common deployment tools:

| Tool | Primary Focus | Container Support | Kubernetes Integration | Best For |
|---|---|---|---|---|
| BentoML | Flexible model packaging and API serving | Yes | Yes | Teams seeking open source customization |
| MLflow | Experiment tracking and model registry | Yes | Yes | Lifecycle management with deployment options |
| Seldon Core | Kubernetes-native model serving | Yes | Native | Enterprise Kubernetes environments |
| Ray Serve | Scalable distributed inference | Yes | Yes | High throughput workloads |
| TensorFlow Serving | TensorFlow model serving | Yes | Supported | TensorFlow-centric systems |

Each platform brings trade-offs. BentoML stands out for its framework-agnostic flexibility and developer-friendly packaging workflow.

Why Packaging Standards Matter

As AI adoption accelerates, organizations often manage dozens or hundreds of models simultaneously. Without standardized deployment patterns, teams encounter:

- Fragmented pipelines

- Inconsistent governance

- High maintenance costs

- Operational risks

Packaging tools introduce discipline into the process. They treat models as deployable software artifacts, ensuring alignment with DevOps best practices such as CI/CD, automated testing, and rollback mechanisms.

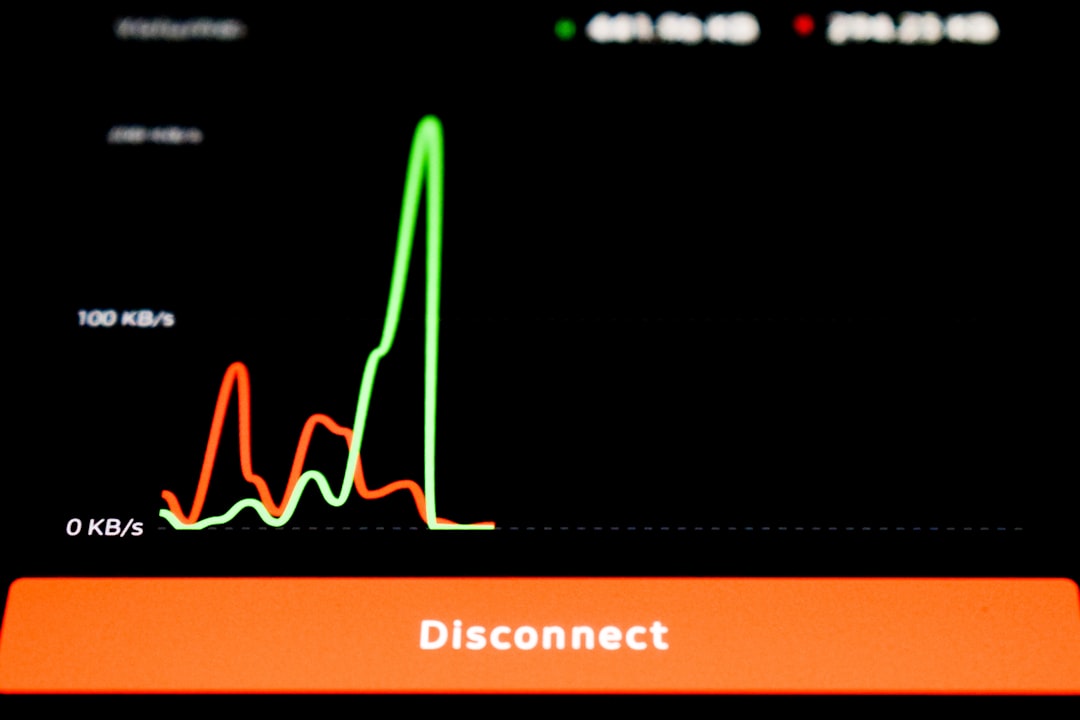

Scaling AI Services in Production

A significant advantage of tools like BentoML is that services can scale with demand. Modern AI services must accommodate:

- Sudden traffic spikes

- High concurrency

- Low-latency response requirements

By integrating with orchestration systems, deployment frameworks enable horizontal scaling. When request volumes increase, additional containers are provisioned automatically. When traffic decreases, resources scale down to reduce costs.

This elasticity is essential for applications such as:

- Fraud detection systems

- Recommendation engines

- Real-time personalization APIs

- Autonomous decision platforms
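
The horizontal scaling behavior described above can be sketched as a proportional rule, modeled on the formula documented for the Kubernetes Horizontal Pod Autoscaler: desired replicas = ceil(current replicas × current metric / target metric). The function name, the parameter names, and the default bounds are assumptions for illustration. The real autoscaler also applies a tolerance band and stabilization windows, which this sketch omits.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_load: float,
    target_load: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
) -> int:
    """Scale replica count in proportion to load per replica, within bounds.

    `current_load` and `target_load` are per-replica values of the same
    metric, for example requests per second.
    """
    if target_load <= 0:
        raise ValueError("target_load must be positive")
    raw = math.ceil(current_replicas * current_load / target_load)
    return max(min_replicas, min(max_replicas, raw))
```

For example, two replicas each handling 150 requests per second against a target of 50 yield six replicas, while four replicas at 10 requests per second scale down to one.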

Security and Compliance Considerations

Enterprise deployments must address strict security requirements. Deployment tools facilitate:

- Role-based access controls

- Secure API authentication

- Encrypted communication channels

- Audit logging

For industries such as finance, healthcare, and government, the ability to reproduce predictions from specific model versions is not optional—it is mandatory. Packaging systems create traceable artifacts that simplify compliance audits.
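
Two of these controls, request authentication and audit logging, can be sketched with the standard library. The example assumes a shared secret between client and service and an HMAC-SHA256 signature over the request body. The function names and the fields in the audit record are hypothetical, and a real deployment would more likely delegate authentication to a gateway or identity provider.

```python
import hashlib
import hmac
import json
import time


def sign_request(secret: bytes, body: bytes) -> str:
    """Return the hex HMAC-SHA256 signature of a request body."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def is_authentic(secret: bytes, body: bytes, signature: str) -> bool:
    """Check a signature using a constant-time comparison."""
    expected = sign_request(secret, body)
    return hmac.compare_digest(expected.encode(), signature.encode())


def audit_record(model_version: str, body: bytes, prediction) -> str:
    """Build one JSON log line tying a prediction to its model version."""
    return json.dumps(
        {
            "timestamp": time.time(),
            "model_version": model_version,
            "input_sha256": hashlib.sha256(body).hexdigest(),
            "prediction": prediction,
        },
        sort_keys=True,
    )
```

Recording the model version and a hash of the input alongside each prediction is what lets an auditor later replay the same input against the same versioned artifact.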

Operational Monitoring and Model Drift

Model performance can degrade over time due to data distribution shifts. Deployment tools often integrate with monitoring platforms to track:

- Input feature distributions

- Prediction confidence levels

- Error rates

- Response times

By identifying drift early, organizations can retrain models or revert to previous versions before business impact escalates.
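
One common way to quantify a shift in an input feature's distribution is the Population Stability Index (PSI), which compares binned fractions of a reference sample and a live sample. The sketch below is an assumption about how such a check might look, not a feature of any specific tool. The function name, the equal-width binning, and the `eps` floor for empty bins are illustrative choices.

```python
import math
from typing import List, Sequence


def population_stability_index(
    reference: Sequence[float],
    live: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Return the PSI between two samples, using bins fitted on `reference`."""
    if not reference or not live:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(reference), max(reference)
    if hi == lo:
        raise ValueError("reference sample has no spread")
    width = (hi - lo) / bins

    def fractions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = max(0, min(bins - 1, idx))  # clamp outliers into edge bins
            counts[idx] += 1
        # Floor each fraction at eps so the logarithm is always defined.
        return [max(c / len(values), eps) for c in counts]

    ref_frac = fractions(reference)
    live_frac = fractions(live)
    return sum((a - e) * math.log(a / e) for e, a in zip(ref_frac, live_frac))
```

A widely used rule of thumb treats values above roughly 0.25 as a significant shift, but that threshold is a convention and should be tuned per feature.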

The Strategic Value of Deployment Infrastructure

AI strategy is not solely about algorithms. Competitive advantage increasingly depends on operational excellence. Organizations that build repeatable, scalable deployment processes gain:

- Faster time to market

- Lower production risk

- Reduced engineering overhead

- Improved cross-team collaboration

Tools like BentoML function as force multipliers. They allow data scientists to focus on improving model accuracy while enabling DevOps teams to maintain reliability and efficiency.

Looking Ahead

The future of AI deployment will likely include deeper integration with automated ML pipelines, stronger observability frameworks, and enhanced support for multimodal and large-scale foundation models. Serverless inference environments and edge deployments will also become more common.

As complexity increases, structured deployment tools will move from optional conveniences to essential infrastructure components. The pattern mirrors the evolution of traditional software engineering—where build systems, package managers, and container orchestration ultimately became standardized best practices.

In conclusion, AI model deployment tools such as BentoML provide a disciplined, scalable approach to operationalizing machine learning systems. They resolve one of the most persistent bottlenecks in AI engineering: translating experimental models into dependable services. By unifying packaging, API generation, containerization, and monitoring, these platforms enable organizations to deploy with confidence, maintain compliance, and scale effectively. In a landscape where production readiness defines success, structured deployment frameworks are no longer optional—they are foundational.